These days, one of the prevailing buzzwords in education is “data.” Administrators repeat it so much, especially during professional development sessions, that it’s almost like watching that Spies Like Us bit on endless repeat. But what does it all mean?! And is it actually worth anyone’s time to focus on it as endlessly as we, as educators, are now forced to?

First, you might benefit from some information on how my district (one of the lowest-performing in the state of Colorado) collects and uses data. On a state level, we are of course subjected to the Colorado Student Assessment Program, or as it is currently known, the Transitional Colorado Assessment Program (despite the fact that it is the same exact test) once per year, always in March. The data from the TCAP is delivered to the district once a year, and we, as teachers, are to use the data to inform our instruction. On a district level, every student in grades 3-10 must take the Measures of Academic Progress (MAP) tests three times a year: Fall, Winter, and Spring. Teachers receive this data three times a year, and we are expected to also use this data to inform our instruction. Finally, on a curricular level, students take end-of-unit assessments - formatted exactly the way they are on the MAP and TCAP tests - at the end of each unit. The number of units varies between the grades, but there are no fewer than four units for each academic year; some have six. Let me stress again that the district collects this data for students in grades 3-10. I currently teach grades 11 and 12, so I shouldn't have a tremendous issue with this. But I do. And here’s why.

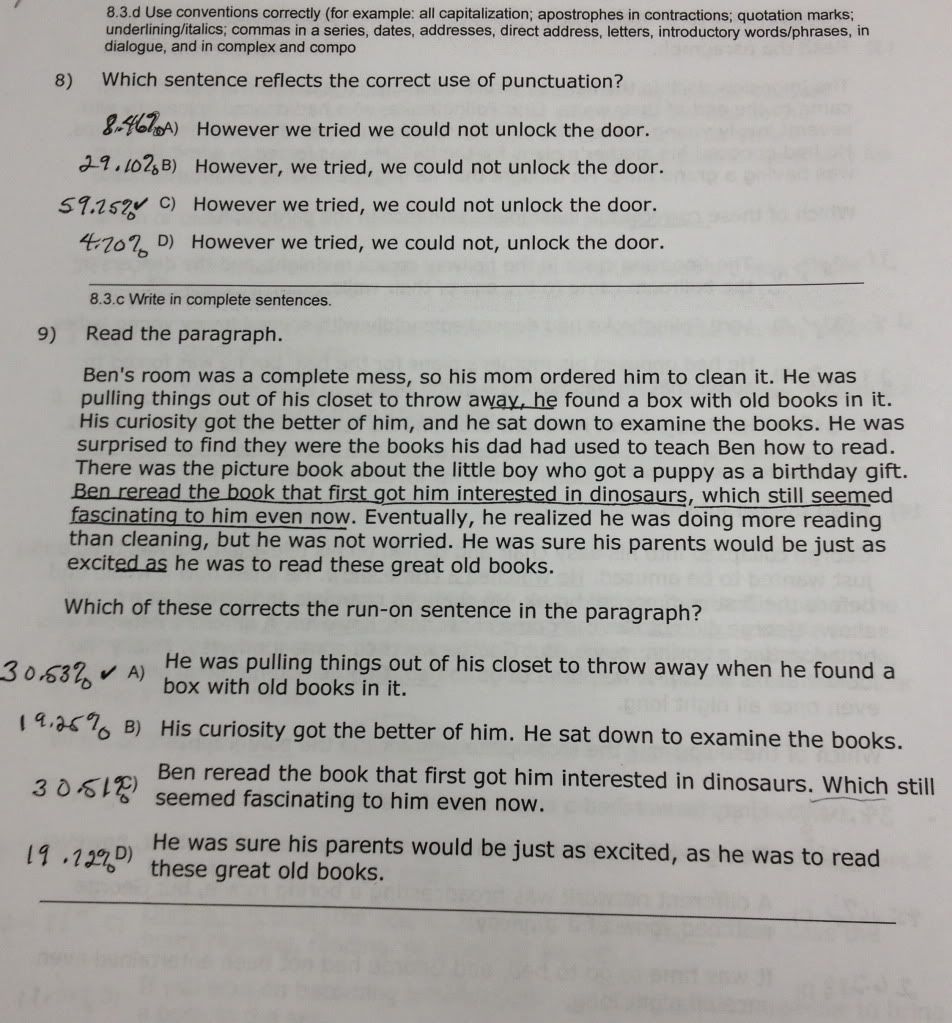

I have some great information - including a picture of a page from a district-generated test - from a recent (you guessed it) professional development I attended (which, mind you, was scheduled two days into a new semester, ridiculously inappropriate timing when I was trying to get my classes on track). Organizational and calendric quibbles aside, however, my larger issues are with 1) how often the data is collected, as referenced above, and 2) the fact that it’s not reliable data in any sense. We all know kids are tested to death right now, so I won’t belabor that point. However, I have proof - straight from the proverbial horse’s (or Director of Secondary Curriculum’s) mouth - that the way we test kids is an ineffective measure of what we teach.

I should backtrack a little bit to offer an additional explanation of the lesson planning/instructional process. The district relies heavily on curriculum documents, which provide us with specific grade-level expectation targets (GLETs) or, with the new common core standards, grade-level expected outcomes (GLEOs). We, as teachers, frame our lesson planning around these documents, fully intending that the students learn the skills (for 12th graders, such as “3.c. Create effective sentence flow and structure”) and are able to use them in a larger setting than just school.

However (to return to my original point), as you will see in the picture of the test page, question #9 does not - at least in a way that makes sense to an eighth grader’s logic - assess the skill(s) in question.

So, here’s the disconnect: question #9 should be assessing the students’ ability to write in complete sentences (e.g., directing the students to choose the correct, complete sentence from the list below would be an acceptable alternative); however, in all reality, and as a roomful of English teachers agreed with me today, the way the question is posed does not assess that skill. The question assesses the students’ ability to choose the best way to fix a run-on sentence. Yes, I understand; run-on sentences are not “technically” complete sentences. But here’s the problem. We’re adults. We know. The kids don’t. They’re the ones taking the test. Not us. Dis-con-nect.

Now, even worse, the test above is a test generated and delivered within the district. But, again, in the words of the DSC, “these tests mimic CSAP.” So...yes, that’s right. Within the district the students are, essentially, set up to fail. And if the district tests mimic the state tests, then...well, racing to the top just got a lot harder. One of the DSC’s solutions was to create pre-tests for use in the classroom that once again mimic the format of CSAP/TCAP; so, we would be testing our students’ ability to take tests. But we have to make sure they have the knowledge, and that’s been one of my greatest issues with testing from the get-go. I suck at multiple-choice tests. I see shades of gray. I argue my way, mentally, to indecision. I get frustrated. I give up. Some of our students do that. BUT - some of our students actually have the knowledge required. They just can’t see through the smoke and mirrors to see what the test actually wants from them. So they have to be taught how to figure out what exactly that is. But if we’re teaching them how to figure out what the test wants, when do we have the time to teach them what they need to KNOW for the test? When will we test their knowledge rather than the ability to decode a prompt?

I will say, in defense of the DSC, that she spent the afternoon seeking ideas from us as a group because she wanted to know how we can fix the “disconnect between what we teach and the way we teach it and the way we assess it to produce data,” and that she wants to find a way to figure out “how deep kids’ knowledge of sentence construction is.” Her heart is in the right place, and I had a moment today when I actually felt sorry for her, because she kept referring to the “reality” of the situation - and here I’m inferring her intent because she wouldn’t (or couldn’t) actually elaborate on what “reality” meant - that our actions, for the immediate and immediately foreseeable future, are governed by the federal programs “running” education. And, as she said repeatedly, “We can’t do nothing. We have to do something.”

But why does that “something” have to be more testing? Why can’t we teach things (sentence construction, grammar, etc.) in context, as many of us do in the Writer’s Workshops we regularly hold in our classrooms, and give the students the chance to transfer that information to a deeper knowledge that will stick with them much longer than the time it takes to fill in bubbles on repetitive tests? Our guest speaker said today (referencing second-language learners, who have to learn how to “code switch” in order to transition between roles in their lives - as son or daughter, employee, student, etc.) that we should, no matter what, never second-guess their ability to have knowledge and think. I think that idea is true for ALL students: second-language learners, advanced, special education, gifted and talented, general education, you name it. I also think that continually forcing these kids to think only in terms of bubbles is stifling their ability to think and their ability to develop deeper knowledge that translates into all areas of their lives, rather than just focusing it on a specific task.

Bottom line? Data in its current form and usage in academia is applied in a way that renders it useless. Guess more of us need to start asking why we have to use it that way.

Oh, and I just have to include “The Year in Education Cartoons.” So appropriate. Enjoy.